My name is Kaz and I am a data analyst/methodologist in the Washington, DC area (USA).

I use this website for myself.

Topics include SAS & R programming, hierarchical linear modeling (HLM), Rasch model analysis, RCT (randomized controlled trial), QED (quasi-experimental design), PSM (propensity score matching), etc. I'm a certified What Works Clearinghouse reviewer (Group design 4.0). With my colleagues, I've designed and conducted many QEDs and RCTs.

I'm working on my R memo lately.

https://drive.google.com/file/d/1CBN0usv0-UBNexJGhqXSrnoSw9bL2F4Z/view?usp=sharing

I'm also slowly translating Takuboku Ishikawa's tanka.

https://docs.google.com/document/d/1PgZ3TDqQxm9LVGqhJjH2sEFMlgUXWDeEuZAsuZnsb4k/edit?usp=sharing

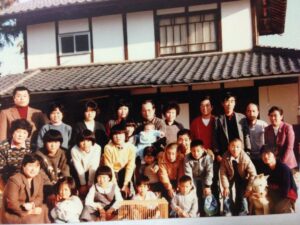

I'm originally from a small town, Akitsu-cho (Hiroshima, Japan). I have about twenty-five cousins. In this family reunion picture from the '80s, I'm holding my dog. I believe this was taken in 1985 or 1986, when I was prepping for college entrance exams.

I also found out that my grandpa's cousin moved to Peru in 1900, so I have a massive number of Peruvian cousins (and a majority of them immigrated to Japan in the '80s and '90s). I look forward to seeing them in Japan in the near future.

My hometown, Akitsu-cho, has a beautiful Sakura mountain park.

I can be reached by email (k u e k a w a AT GMAIL COM).

Affiliate links:

Etrade: https://t.co/fmYST7PpyX?amp=1

Wise Transfer: https://wise.com/invite/u/kazuakiu3

Kajabi: https://app.kajabi.com/r/mo4s2a46/t/dzlkzuvi

italki, my French teacher Valou

https://go.italki.com/Valou_Teacher

italki, my French teacher Inest

My italki generic affiliate link

https://go.italki.com/eigonodo

Other things.

https://app.beta.twittbot.net/